Update! From Windows 1809 onwards this is no longer an issue!

See 6 Things You Can Do with Docker in Windows Server 2019 That You Couldn't Do in Windows Server 2016

- Objectives of this Docker Home Media Server: one of the big tasks of a completely automated media server is media aggregation. For example, when a TV show episode becomes available, automatically download it, collect its poster, fanart, subtitles, etc., put them all in a folder of your choice (e.g. inside your TV Shows folder), update your media library (e.g. on Plex) and then send a...

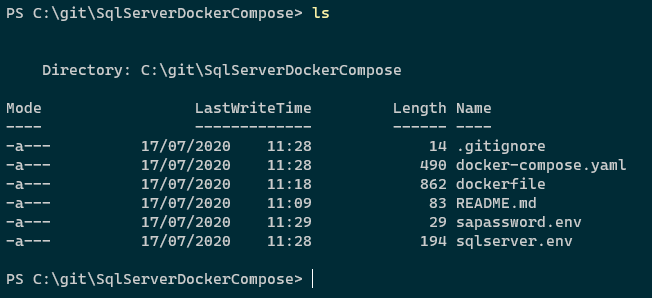

- Compose installation on Windows Server 2016 (Docker EE for Windows only): start PowerShell as an administrator and run the following command to download the Compose binary from the GitHub repository.

- `docker-compose version` output: docker-compose version 1.9.0, build 2585387; docker-py version: 1.10.6; CPython version: 2.7.12; OpenSSL version: OpenSSL 1.0.2h 3 May 2016.

You use Docker volumes to store state outside of containers, so your data survives when you replace the container to update your app. Docker uses symbolic links to give the volume a friendly path inside the container, like `C:\data`. Some application runtimes try to follow the friendly path to the real location - which is actually outside the container - and get themselves into trouble.

This issue may not affect all application runtimes, but I have seen it with Windows Docker containers running Java, NodeJS, Go, PHP and .NET Framework apps, so it's pretty widespread.

You can avoid that issue by using a mapped drive (say G:) inside the container. Your app writes to the G drive and the runtime happily lets the Windows filesystem take care of actually finding the location, which happens to be a symlink to a directory on the Docker host.

Filesystems in Docker Containers

An application running in a container sees a complete filesystem, and the process can read and write any files it has access to. In a Windows Docker container the filesystem consists of a single C drive, and you'll see all the usual file paths in there - like `C:\Program Files` and `C:\inetpub`. In reality the C drive is composed of many parts, which Docker assembles into a virtual filesystem.

It's important to understand this. It's the basis for how images are shared between multiple containers, and it's the reason why data stored in a container is lost when the container is removed. The virtual filesystem the container sees is built up of many image layers which are read-only and shared, and a final writeable layer which is unique to the container:

When processes inside the container modify files from read-only layers, they're actually copied into the writeable layer. That layer stores the modified version and hides the original. The underlying file in the read-only layer is unchanged, so images don't get modified when containers make changes.
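To make the copy-on-write behaviour concrete, here is a toy model in Python. It is a sketch under simplifying assumptions (each layer is just a dictionary from path to file content, and deletes are ignored), not a description of how Docker's storage driver really works; the class name and sample paths are made up for illustration.

```python
from typing import Dict, List, Optional


class ContainerFilesystem:
    """Toy model: shared read-only image layers plus one writeable layer."""

    def __init__(self, image_layers: List[Dict[str, str]]) -> None:
        # Image layers are ordered bottom to top and are never modified.
        self.image_layers = image_layers
        # The writeable layer is private to this container.
        self.writeable: Dict[str, str] = {}

    def read(self, path: str) -> Optional[str]:
        # A file in the writeable layer hides the original in the image.
        if path in self.writeable:
            return self.writeable[path]
        # Otherwise the topmost image layer containing the file wins.
        for layer in reversed(self.image_layers):
            if path in layer:
                return layer[path]
        return None

    def write(self, path: str, content: str) -> None:
        # Copy-on-write: changes only ever land in the writeable layer.
        self.writeable[path] = content


# Two containers from the same image share its layers.
base_layer = {r"C:\inetpub\wwwroot\index.html": "v1"}
app_layer = {r"C:\app\app.config": "defaults"}
web1 = ContainerFilesystem([base_layer, app_layer])
web2 = ContainerFilesystem([base_layer, app_layer])

web1.write(r"C:\inetpub\wwwroot\index.html", "v2")
print(web1.read(r"C:\inetpub\wwwroot\index.html"))  # v2
print(web2.read(r"C:\inetpub\wwwroot\index.html"))  # v1
print(base_layer[r"C:\inetpub\wwwroot\index.html"])  # v1
```

Throwing away `web1` in this model simply discards its `writeable` dictionary, while the shared image layers are untouched.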

Removing a container removes its writeable layer and all the data in it, so that's not the place to store data if you run a stateful application in a container. You can store state in a volume, which is a separate storage location that one or more containers can access, and has a separate lifecycle to the container:

Storing State in Docker Volumes

Using volumes is how you store data in a Dockerized application, so it survives beyond the life of a container. You run your database container with a volume for the data files. When you replace your container from a new image (to deploy a Windows update or a schema change), you use the same volume, and the new container has all the data from the original container.

The SQL Server Docker lab on GitHub walks you through an example of this.
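A similarly simplified model shows why a volume outlives the container. Everything here is hypothetical (the `Volume` and `Container` classes, the `db-data` name, the image tags): writes under the mount path go to the volume, everything else goes to the container's own writeable layer, and replacing the container keeps the volume.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Volume:
    """Storage with its own lifecycle, independent of any container."""
    name: str
    files: Dict[str, str] = field(default_factory=dict)


@dataclass
class Container:
    image: str
    volume: Volume
    mount_path: str  # where the volume appears inside the container
    writeable_layer: Dict[str, str] = field(default_factory=dict)

    def _volume_key(self, path: str) -> Optional[str]:
        # Paths under the mount point live in the volume (case-insensitive, as on Windows).
        prefix = self.mount_path.lower().rstrip("\\") + "\\"
        if path.lower().startswith(prefix):
            return path[len(prefix):]
        return None

    def write(self, path: str, content: str) -> None:
        key = self._volume_key(path)
        if key is not None:
            self.volume.files[key] = content
        else:
            self.writeable_layer[path] = content

    def read(self, path: str) -> Optional[str]:
        key = self._volume_key(path)
        if key is not None:
            return self.volume.files.get(key)
        return self.writeable_layer.get(path)


data = Volume("db-data")
v1 = Container("my-db:v1", data, r"C:\data")
v1.write(r"C:\data\orders.mdf", "order rows")
v1.write(r"C:\temp\cache.tmp", "scratch")
del v1  # "remove" the container: its writeable layer goes with it

v2 = Container("my-db:v2", data, r"C:\data")  # new image, same volume
print(v2.read(r"C:\data\orders.mdf"))  # order rows
print(v2.read(r"C:\temp\cache.tmp"))   # None
```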

You define volumes in the Dockerfile, specifying the destination path where the volume is presented to the container. Here's a simple example which stores IIS logs in a volume:

You can build an image from that Dockerfile and run it in a container. When you run `docker container inspect`, you will see that there is a mount point listed for the volume:

The source location of the mount shows the physical path on the Docker host where the files for the volume are written - in `C:\ProgramData\docker\volumes`. When IIS writes logs from the container in `C:\inetpub\logs`, they're actually written to the directory in `C:\ProgramData\docker\volumes` on the host.
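If you want to do that source-to-destination translation in a script, the `Mounts` array in the JSON that `docker container inspect` prints has `Source` and `Destination` fields for each mount. This Python sketch assumes that shape; the sample below is abridged and hypothetical (the container and volume names are invented), and on a real host you would pass in the command's standard output instead.

```python
import json
import ntpath
from typing import Optional


def host_path_for(inspect_output: str, container_path: str) -> Optional[str]:
    """Map a path inside a container to the host path behind it, using the
    Mounts section of `docker container inspect` output (a JSON array)."""
    container = json.loads(inspect_output)[0]
    wanted = ntpath.normcase(container_path)  # Windows paths are case-insensitive
    for mount in container.get("Mounts", []):
        destination = mount["Destination"].rstrip("\\")
        dest_norm = ntpath.normcase(destination)
        if wanted == dest_norm or wanted.startswith(dest_norm + "\\"):
            relative = container_path[len(destination):].lstrip("\\")
            return ntpath.join(mount["Source"], relative) if relative else mount["Source"]
    return None


# Abridged, hypothetical inspect output for a container with a log volume.
sample = json.dumps([{
    "Name": "/iis-logs-demo",
    "Mounts": [{
        "Type": "volume",
        "Name": "iis-logs",
        "Source": r"C:\ProgramData\docker\volumes\iis-logs\_data",
        "Destination": r"c:\inetpub\logs",
        "Driver": "local",
        "RW": True,
    }],
}])

print(host_path_for(sample, r"C:\inetpub\logs\LogFiles\W3SVC1\ex190101.log"))
# C:\ProgramData\docker\volumes\iis-logs\_data\LogFiles\W3SVC1\ex190101.log
```

Using `ntpath` rather than `os.path` keeps the Windows path rules even if the script itself runs somewhere else.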

The destination path for a volume must be a new folder, or an existing empty folder. Docker on Windows is different from Linux in that respect: you can't use a destination folder which already contains data from the image, and you can't use a single file as a destination.
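Docker enforces this rule itself, but a pre-flight check can give a clearer error in a build script. This is a minimal sketch, assuming you can inspect a local directory that stands in for the image's filesystem; the function name is hypothetical.

```python
import tempfile
from pathlib import Path


def check_volume_destination(path: Path) -> None:
    """Raise ValueError if `path` breaks the Windows rule for volume
    destinations: it must not exist yet, or be an empty directory."""
    if not path.exists():
        return  # a new folder is fine
    if not path.is_dir():
        raise ValueError(f"{path} is a file; the destination must be a directory")
    if any(path.iterdir()):
        raise ValueError(f"{path} already contains data")


results = {}
with tempfile.TemporaryDirectory() as root:
    image = Path(root)  # stands in for the image's filesystem
    (image / "empty").mkdir()
    (image / "logs").mkdir()
    (image / "logs" / "old.log").write_text("from the image")
    (image / "web.config").write_text("<configuration/>")

    for name in ["new-folder", "empty", "logs", "web.config"]:
        try:
            check_volume_destination(image / name)
            results[name] = "ok"
        except ValueError:
            results[name] = "rejected"

print(results)
# {'new-folder': 'ok', 'empty': 'ok', 'logs': 'rejected', 'web.config': 'rejected'}
```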

Docker surfaces the destination directory for the volume as a symbolic link (symlink) inside the container, and that's where the trouble begins.

Symlink Directories

Symbolic links have been a part of the Windows filesystem for a long time, but they're nowhere near as popular as they are in Linux. A symlink is just like an alias, which abstracts the physical location of a file or directory. Like all abstractions, it lets you work at a higher level and ignore the implementation details.

In Linux it's common to install software to a folder which contains the version number - like `/opt/hbase-1.2.3` - and then create a symlink to that directory, with a name that removes the version number - `/opt/hbase`. In all your scripts and shortcuts you use the symlink. When you upgrade the software, you change the symlink to point to the new version and you don't need to change anything else. You can also leave the old version in place and roll back by changing the symlink.
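Here is that pattern as a minimal Python sketch for a POSIX system, using a temporary directory in place of `/opt`. Creating the new link under a staging name and renaming it over the old one is my choice for making each switch a single atomic step; the helper name is hypothetical.

```python
import os
import tempfile
from pathlib import Path


def point_symlink(link: Path, target: str) -> None:
    """Make `link` point at `target`, replacing any existing link in one step."""
    staging = link.with_name(link.name + ".new")
    if staging.is_symlink():
        staging.unlink()  # clear a leftover from an interrupted switch
    os.symlink(target, staging, target_is_directory=True)
    os.replace(staging, link)  # rename over the old link; atomic on POSIX


with tempfile.TemporaryDirectory() as root:
    opt = Path(root)  # stands in for /opt
    for version in ["hbase-1.2.3", "hbase-1.2.4"]:
        (opt / version).mkdir()
        (opt / version / "VERSION").write_text(version)

    point_symlink(opt / "hbase", "hbase-1.2.3")  # install
    point_symlink(opt / "hbase", "hbase-1.2.4")  # upgrade
    upgraded = (opt / "hbase" / "VERSION").read_text()
    point_symlink(opt / "hbase", "hbase-1.2.3")  # roll back
    rolled_back = (opt / "hbase" / "VERSION").read_text()

print(upgraded, rolled_back)  # hbase-1.2.4 hbase-1.2.3
```

The link target is relative (`hbase-1.2.3`), so it resolves against the link's own directory, just as `/opt/hbase` would point at a sibling folder.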

You can do the same in Windows, but it's much less common. The symlink mechanism is how Docker volumes work in Windows. If you `docker container exec` into a running container and look at the volume directory, you'll see it listed as a symlink directory (`SYMLINKD`) with a strange path:

The `logs` directory is actually a symlink directory, and it points to the path `\\?\ContainerMappedDirectories\8305589A-2E5D...`. The Windows filesystem understands that symlink, so if apps write directly to the `logs` folder, Windows writes to the symlink directory, which is actually the Docker volume on the host.

The trouble really begins when you configure your app to use a volume, and the application runtime tries to follow the symlink. Runtimes like Go, Java, PHP, NodeJS and even .NET will do this - they resolve the symlink to get the real directory and try to write to the real path. When the 'real' path starts with `\\?\ContainerMappedDirectories`, the runtime can't work with it and the write fails. It might raise an exception, or it might just silently fail to write data. Neither is much good for your stateful app.
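The failure mode can be modelled as a path rewrite. This toy Python function is an illustration only, not how any particular runtime resolves links (real runtimes ask the OS for the final path): it swaps the first symlinked directory in a path for the link's target. The link table and the `example-id` segment are hypothetical stand-ins for the real GUID path.

```python
import ntpath
from typing import Dict


def resolve_first_symlink(path: str, symlinks: Dict[str, str]) -> str:
    """Rewrite `path` the way a symlink-resolving runtime would, replacing the
    first directory symlink it passes through with the link's target.
    `symlinks` maps link paths to targets; matching is case-insensitive."""
    links = {ntpath.normcase(k): v for k, v in symlinks.items()}
    drive, rest = ntpath.splitdrive(path)
    parts = [p for p in rest.split("\\") if p]
    current = drive + "\\"
    for i, part in enumerate(parts):
        current = ntpath.join(current, part)
        target = links.get(ntpath.normcase(current))
        if target is not None:
            return ntpath.join(target, *parts[i + 1:])
    return path


# Hypothetical link table: the volume directory is a symlink into the
# container-mapped path (the real ID is a long GUID).
links = {r"C:\inetpub\logs": r"\\?\ContainerMappedDirectories\example-id"}

print(resolve_first_symlink(r"C:\inetpub\logs\LogFiles\a.log", links))
# \\?\ContainerMappedDirectories\example-id\LogFiles\a.log
print(resolve_first_symlink(r"G:\LogFiles\a.log", links))
# G:\LogFiles\a.log
```

A path on a mapped drive such as G: passes through no symlink the runtime can see, so in this model it comes back unchanged.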

DOS Devices to the Rescue

The solution - as always - is to introduce another layer of abstraction, so the app runtime doesn't directly use the symlink directory. In the Dockerfile you can create a drive mapping to the volume directory, and configure the app to write to the drive. The runtime just sees a drive as the target and doesn't try to do anything special - it writes the data, and Windows takes care of putting it in the right place.

I use the G drive in my Dockerfiles, just to distance it from the C drive. Ordinarily you use the subst utility to create a mapped drive, but that doesn't create a map which persists between sessions. Instead you need to write a registry entry in your Dockerfile to permanently set up the mapped drive:

This creates a fake G drive which maps to the volume directory `C:\data`. Then you can configure your app to write to the G drive and it won't realise the target is a symlink, so it won't try to resolve the path and it will write correctly.
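The registry entry itself isn't reproduced in this post. As an assumption based on the standard convention for persistent drive mappings, it is a string value under `HKLM\SYSTEM\CurrentControlSet\Control\Session Manager\DOS Devices`, named after the drive (`G:`) with data `\??\` followed by the target path. This Python sketch builds that value and, on Windows only, writes it with the standard `winreg` module; both function names are hypothetical.

```python
from typing import Tuple

# Assumed location of persistent DOS device mappings (value name "G:",
# value data "\??\" + target); not taken from the original Dockerfile.
DOS_DEVICES_KEY = r"SYSTEM\CurrentControlSet\Control\Session Manager\DOS Devices"


def dos_device_value(drive: str, target: str) -> Tuple[str, str]:
    """Build the registry value name and data for mapping `drive` to `target`."""
    letter = drive.rstrip(":\\").upper()
    if len(letter) != 1 or not letter.isalpha():
        raise ValueError(f"not a drive letter: {drive!r}")
    return f"{letter}:", "\\??\\" + target


def write_dos_device(drive: str, target: str) -> None:
    """Write the mapping into HKLM. Windows only; needs administrator rights."""
    import winreg  # standard library, but only present on Windows

    name, data = dos_device_value(drive, target)
    with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, DOS_DEVICES_KEY, 0,
                        winreg.KEY_SET_VALUE) as key:
        winreg.SetValueEx(key, name, 0, winreg.REG_SZ, data)


print(dos_device_value("G:", r"C:\data"))  # ('G:', '\\??\\C:\\data')
```

In a Dockerfile you would more likely make the same change with PowerShell's `Set-ItemProperty` in a `RUN` instruction. As I understand it, Windows reads these entries at startup, which is why setting the value once in the image should be enough for every container started from it.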

I use this technique in these Jenkins and Bonobo Dockerfiles, where I also set up the G drive as the target in the app configuration.

How you configure the storage target depends on the app. Jenkins uses an environment variable, which is very easy. Bonobo uses Web.config, which means running some XML updates with PowerShell in the Dockerfile. This technique means you need to mentally map the fake G drive to a real Docker volume, but it works with all the apps I've tried, and it also works with volume mounts.
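Jenkins reads its home directory from the `JENKINS_HOME` environment variable, so pointing it at the G drive is a single `ENV` setting. For apps configured through Web.config, the XML update might look like this Python sketch using the standard library's ElementTree. The `RepositoryPath` key and the config fragment are hypothetical, not Bonobo's actual settings.

```python
import xml.etree.ElementTree as ET


def set_app_setting(config_xml: str, key: str, value: str) -> str:
    """Set <appSettings><add key=... value=.../> in a .NET config document,
    adding the entry (and the appSettings element) if it is missing."""
    root = ET.fromstring(config_xml)
    settings = root.find("appSettings")
    if settings is None:
        settings = ET.SubElement(root, "appSettings")
    for entry in settings.findall("add"):
        if entry.get("key") == key:
            entry.set("value", value)
            break
    else:
        # No existing entry with this key: append a new one.
        ET.SubElement(settings, "add", key=key, value=value)
    return ET.tostring(root, encoding="unicode")


# Hypothetical Web.config fragment; the key name is illustrative only.
web_config = """<configuration>
  <appSettings>
    <add key="RepositoryPath" value="~\\App_Data\\Repositories" />
  </appSettings>
</configuration>"""

updated = set_app_setting(web_config, "RepositoryPath", "G:\\Repositories")
print(ET.fromstring(updated).find("appSettings/add").get("value"))  # G:\Repositories
```

ElementTree drops XML comments when it parses, so on a real Web.config a tool that preserves comments may be a better fit.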

Mounting Volumes

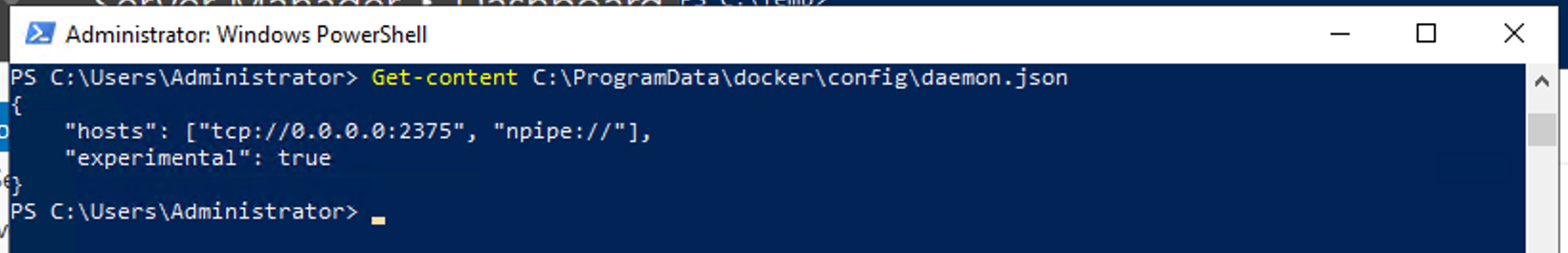

Docker volumes on Windows are always created in the path of the graph driver, which is where Docker stores all image layers, writeable container layers and volumes. By default the root of the graph driver in Windows is `C:\ProgramData\docker`, but you can mount a volume to a specific directory when you run a container.

I have a server with a single SSD for the C drive, which is where my Docker graph is stored. I get fast access to image layers at the cost of zero redundancy, but that's fine because I can always pull images again if the disk fails. For my application data, I want to use the E drive which is a RAID array of larger but slower spinning disks.

When I run my local Git server and Jenkins server in Docker containers I use a volume mount, pointing the Docker volume in the container to a location on my RAID array:

Actually I use a compose file for my services, but that's for a different post.
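The exact command isn't shown here. This is a sketch of building it, assuming the `--mount type=bind,source=...,target=...` syntax of `docker container run` (the shorter `-v source:target` form does the same job); the container name, image name and `E:\git` folder are hypothetical.

```python
from typing import List


def run_with_bind_mount(name: str, image: str, host_dir: str, container_dir: str) -> List[str]:
    """Build a `docker container run` command that mounts a host directory
    at the container's volume path."""
    if "," in host_dir or "," in container_dir:
        # --mount options are comma-separated, so commas in paths would break parsing.
        raise ValueError("paths must not contain commas")
    return [
        "docker", "container", "run", "--detach",
        "--name", name,
        "--mount", f"type=bind,source={host_dir},target={container_dir}",
        image,
    ]


args = run_with_bind_mount("git", "example/git-server", r"E:\git", r"C:\data")
print(" ".join(args))
# On a Windows Docker host you could pass `args` to subprocess.run(args, check=True).
```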

So now there are multiple mappings from the G drive the app uses to the Docker volume, and the underlying storage location:
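One way to keep those layers straight is to write the chain down and follow it hop by hop. In this Python sketch the chain (G: to `C:\data`, then `C:\data` to a folder on the E drive) is a hypothetical layout matching the description above, and the function name is made up.

```python
import ntpath
from typing import List, Tuple


def follow_mappings(path: str, mappings: List[Tuple[str, str]]) -> List[str]:
    """Apply each (prefix, replacement) mapping in order and return every hop,
    starting with the original path. Matching is case-insensitive."""
    hops = [path]
    for prefix, replacement in mappings:
        prefix, replacement = prefix.rstrip("\\"), replacement.rstrip("\\")
        current = hops[-1]
        norm, norm_prefix = ntpath.normcase(current), ntpath.normcase(prefix)
        if norm == norm_prefix or norm.startswith(norm_prefix + "\\"):
            hops.append(replacement + current[len(prefix):])
    return hops


# Hypothetical layout: the G drive maps to the volume directory, and the
# volume is mounted from a folder on the RAID array.
chain = [
    ("G:", r"C:\data"),       # DOS device inside the container
    (r"C:\data", r"E:\git"),  # volume mount from the host
]
for hop in follow_mappings(r"G:\repositories\app.git", chain):
    print(hop)
# G:\repositories\app.git
# C:\data\repositories\app.git
# E:\git\repositories\app.git
```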

Book Plug

I cover volumes - and everything else to do with Docker on Windows - in my book Docker on Windows, which is out now.

If you're not into technical books, all the code samples are on GitHub: sixeyed/docker-on-windows and every sample has a Docker image on the Hub: dockeronwindows.

Use the G Drive For Now

I've hit this problem with lots of different app runtimes, so I've started to do this as the norm with stateful applications. It saves a lot of time to configure the G drive first, and ensure the app is writing state to the G drive, instead of chasing down issues later.

The root of the problem actually seems to be a change in the file descriptor for symlink directories in Windows Server 2016. Issues have been logged against some of the application runtimes (like Go and Java) to make them work correctly with the symlink, but until they're fixed the G drive solution is the most robust that I've found.

It would be nice if the image format supported this, so you could write VOLUME G: in the Dockerfile and hide all this away. But this is a Windows-specific issue and Docker is a platform that works in the same way across multiple operating systems. Drive letters don't mean anything in Linux so I suspect we'll need to use this workaround for a while.

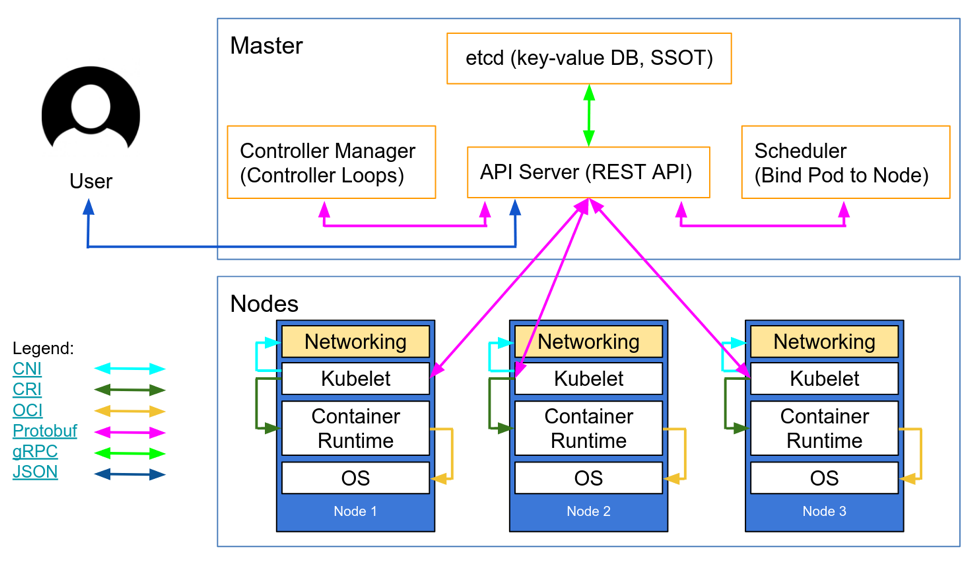

One of Docker’s goals has always been to provide the best experience working with containers from a Desktop environment, with an experience as close to native as possible whether you are working on Windows, Mac or Linux. We spend a lot of time working with the software stacks provided by Microsoft and Apple to achieve this. As part of this work, we have been closely monitoring Windows Subsystem for Linux (WSL) since it was introduced in 2016, to see how we could leverage it for our products.

The original WSL was an impressive effort to emulate a Linux Kernel on top of Windows, but there are such foundational differences between Windows and Linux that some things were impossible to implement with the same behavior as on native Linux, and this meant that it was impossible to run the Docker Engine and Kubernetes directly inside WSL. Instead, Docker Desktop developed an alternative solution using Hyper-V VMs and LinuxKit to achieve the seamless integration our users expect and love today.

Microsoft has just announced WSL 2 with a major architecture change: instead of using emulation, they are actually providing a real Linux Kernel running inside a lightweight VM. This approach is architecturally very close to what we do with LinuxKit and Hyper-V today, with the additional benefit that it is more lightweight and more tightly integrated with Windows than Docker can provide alone. The Docker daemon runs well on it with great performance, and the time it takes from a cold boot to have dockerd running in WSL 2 is around 2 seconds on our developer machines. We are very excited about this technology, and we are happy to announce that we are working on a new version of Docker Desktop leveraging WSL 2, with a public preview in July. It will make the Docker experience for developing with containers even greater, unlock new capabilities, and because WSL 2 works on Windows 10 Home edition, so will Docker Desktop.

As part of our shared effort to make Docker Desktop the best way to use Docker on Windows, Microsoft gave us early builds of WSL 2 so that we could evaluate the technology, see how it fits with our product, and share feedback about what is missing or broken. We started prototyping different approaches and we are now ready to share a little bit about what is coming in the next few months.

We will replace the Hyper-V VM we currently use with a WSL 2 integration package. This package will provide the same features as the current Docker Desktop VM: Kubernetes 1-click setup, automatic updates, transparent HTTP proxy configuration, access to the daemon from Windows, transparent bind mounts of Windows files, and more.

This integration package will contain both the server side components required to run Docker and Kubernetes, as well as the CLI tools used to interact with those components within WSL. We will then be able to introduce a new feature with Docker Desktop: Linux workspaces.

Linux Workspaces

When using Docker Desktop today, the VM running the daemon is completely opaque: you can interact with the Docker and Kubernetes API from Windows, but you can’t run anything within the VM except Docker containers or Kubernetes Pods.

With WSL 2 integration, you will still experience the same seamless integration with Windows, but Linux programs running inside WSL will also be able to do the same. This has a huge impact for developers working on projects targeting a Linux environment, or with a build process tailored for Linux. No need for maintaining both Linux and Windows build scripts anymore! As an example, a developer at Docker can now work on the Linux Docker daemon on Windows, using the same set of tools and scripts as a developer on a Linux machine:

A developer working on the Docker Daemon using Docker Desktop technical preview, WSL 2 and VS Code remote

Also, bind mounts from WSL will support inotify events and have nearly identical I/O performance as on a native Linux machine, which will solve one of the major Docker Desktop pain points with I/O-heavy toolchains. NodeJS, PHP and other web development tools will benefit greatly from this feature.

Combined with Visual Studio Code “Remote to WSL”, Docker Desktop Linux workspaces will make it possible to run a full Linux toolchain for building containers on your local machine, from your IDE running on Windows.

Performance

With WSL 2, Microsoft put a huge amount of effort into performance and resource allocation: the VM is set up to use dynamic memory allocation and can schedule work on all the host CPUs, while consuming as little (or as much) memory as it requires, within the limits of what the host can provide and in a collaborative manner towards Win32 processes running on the host.

Docker Desktop will leverage this to greatly improve its resource consumption. It will use as little or as much CPU and memory as it needs, and CPU/Memory intensive tasks such as building a container will run much faster than today.

In addition, the time to start a WSL 2 distribution and the Docker daemon after a cold start is blazingly fast: within 2s on our development laptops, compared to tens of seconds in the current version of Docker Desktop. This opens the door to battery-life optimizations such as deferring the daemon startup until the first API call, and automatically stopping the daemon when it is not running any containers.
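The post describes on-demand startup only as a possibility. As a hypothetical illustration of such a policy, and not Docker Desktop's implementation, this Python class starts a daemon on the first API call and stops it when the last container stops; the start and stop actions are injected callbacks.

```python
from typing import Callable, List


class OnDemandDaemon:
    """Hypothetical policy: start the daemon on the first API call and stop
    it once no containers are running."""

    def __init__(self, start: Callable[[], None], stop: Callable[[], None]) -> None:
        self._start = start
        self._stop = stop
        self.running = False
        self.containers = 0

    def api_call(self) -> None:
        # Defer the startup cost until something actually needs the API.
        if not self.running:
            self._start()
            self.running = True

    def container_started(self) -> None:
        self.api_call()
        self.containers += 1

    def container_stopped(self) -> None:
        self.containers = max(0, self.containers - 1)
        # Idle: nothing is running, so release CPU, memory and battery.
        if self.containers == 0 and self.running:
            self._stop()
            self.running = False


events: List[str] = []
daemon = OnDemandDaemon(lambda: events.append("start"), lambda: events.append("stop"))
daemon.api_call()           # first API call: daemon starts
daemon.container_started()  # already running, no second start
daemon.container_stopped()  # last container gone: daemon stops
print(events)  # ['start', 'stop']
```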

Zero-configuration bind mount support

One of the major issues users have today with Docker Desktop, especially in an enterprise environment, is the reliability of Windows file bind mounts. The current implementation relies on the Samba Windows service, which may be deactivated, blocked by enterprise GPOs, blocked by third-party firewalls, etc. Docker Desktop with WSL 2 will solve this whole category of issues by leveraging WSL features for implementing bind mounts of Windows files. It will provide an “it just works” experience, out of the box.

Thanks to our collaboration with Microsoft, we are already hard at work on implementing our vision. We have written core functionalities to deploy an integration package, run the daemon and expose it to Windows processes, with support for bind mounts and port forwarding.

A technical preview of Docker Desktop for WSL 2 will be available for download in July. It will run side by side with the current version of Docker Desktop, so you can continue to work safely on your existing projects. If you are running the latest Windows Insider build, you will be able to experience this first hand. In the coming months, we will add more features until the WSL 2 architecture is used in Docker Desktop for everyone running a compatible version of Windows.